The Privacy Paradox: Why Americans Feel Powerless Over Their Personal Data

Your Data Is Everywhere, and Nobody Cares (Especially Not You)

Americans say they care about privacy. Surveys show that most people worry about how their personal data is collected, shared, and sold.

But when it comes to action? They do almost nothing to protect it. They skip reading privacy policies. They use platforms they don’t trust.

Most Americans assume their data is already out there, so why bother?

This is the privacy paradox: people are aware of the risks but feel powerless to change anything. The more they learn about how their data is used, the more they realize how little control they actually have. And as artificial intelligence takes a bigger role in data collection, that sense of powerlessness is only going to grow.

How did we get here?

And why don’t Americans fight back?

This discussion explores the contradictions at the heart of data privacy — the illusion of consent, the failure of accountability, and the growing resignation that privacy is already a lost cause.

Why Americans Worry About Data Privacy But Feel Helpless

Most Americans care about data privacy. Almost none of them know what to do about it.

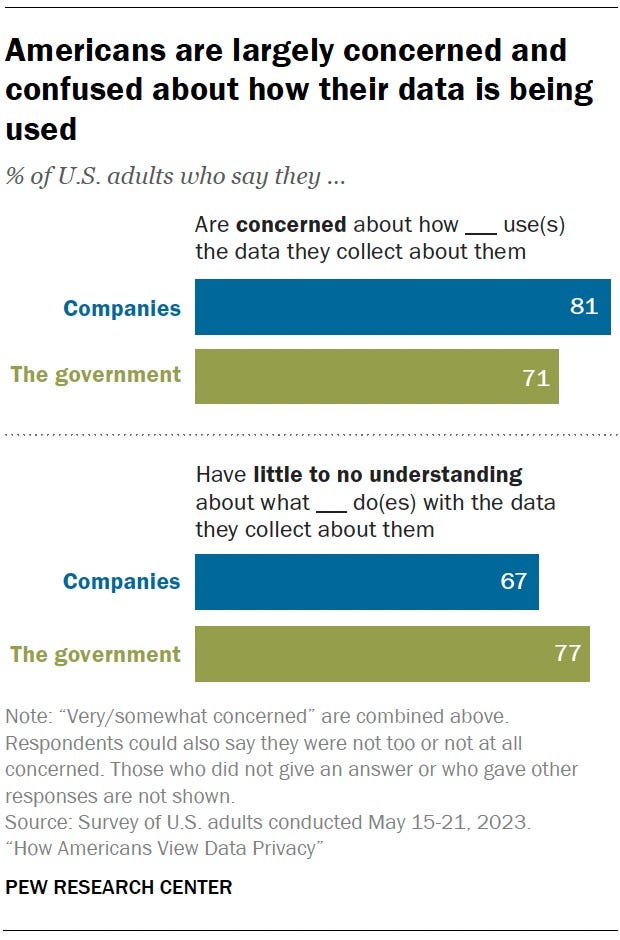

This 2023 Pew Research Center survey makes it clear:

81% worry about how private companies use their data, yet 67% admit they don’t understand what companies actually do with it.

And for government tracking — 71% are concerned, but 77% have little to no understanding of how their data is handled.

People see the problem. They just don’t have the tools to fix it.

That’s not an accident. Privacy policies aren’t written to inform — they’re written to exhaust.

- Companies bury details under pages of legalese, using phrases like “we may share your data with third parties” without saying who those third parties are.

- Government agencies? They rarely even acknowledge the full extent of their data collection.

The result: people know they’re being tracked, but they don’t know by whom, how, or why.

And after a while? They stop trying to figure it out.

Massive data breaches happen all the time — Equifax, Facebook, T-Mobile. Companies apologize. They offer free credit monitoring.

Then it happens again.

Nothing changes, except the growing sense that privacy is already lost. Over time, this breeds something worse than ignorance: apathy.

Even those who do understand the risks often give up because protecting privacy is inconvenient.

- Adjusting settings takes effort.

- Deleting Facebook means losing connections.

- Ditching Google? Good luck finding alternatives that work as seamlessly.

The trade-off is clear: fight for privacy, or keep life simple.

Most people choose the latter.

This is the paradox: people care, but not enough to change their habits.

The system is too confusing, breaches too common, and alternatives too frustrating.

Until something forces a shift — whether it’s stronger laws or a major public reckoning — data collection will continue, and most Americans will keep pretending it doesn’t matter.

Why Most People Agree to Privacy Policies Without Reading Them

Americans technically have a choice when it comes to their data. They can read privacy policies before agreeing to them. But in practice?

They don’t.

The Pew survey shows that 56% of Americans “always” or “almost always” agree to privacy policies without reading them. Another 22% “sometimes” skip them. That means nearly 8 in 10 Americans routinely sign away their data rights without knowing what they’re agreeing to.

And companies count on that.

Privacy policies aren’t meant to be read. They’re long, vague, and steeped in technical jargon.

These documents serve one purpose: protecting companies from lawsuits, not informing users.

The result? An illusion of consent.

Legally, clicking “I agree” means you’ve made an informed decision. In reality, it’s closer to a forced choice — agree or lose access to the service. There’s no negotiation, no alternative, no way to opt out without walking away entirely.

So they click.

Even those who do care about privacy aren’t given realistic options. Reading a single privacy policy can take dozens of minutes. A 2012 study estimated that if the average American actually read every privacy policy they encountered in a year, it would take a full month of working days. That was over a decade ago — the number has only gone up.

The takeaway is clear: Americans aren’t ignoring privacy policies because they don’t care. They’re ignoring them because the system is built to be ignored.

When nearly everyone skips reading the fine print, it’s not a personal failing — it’s a sign that the system itself is broken.

Why People Don’t Trust Privacy Settings to Protect Their Data

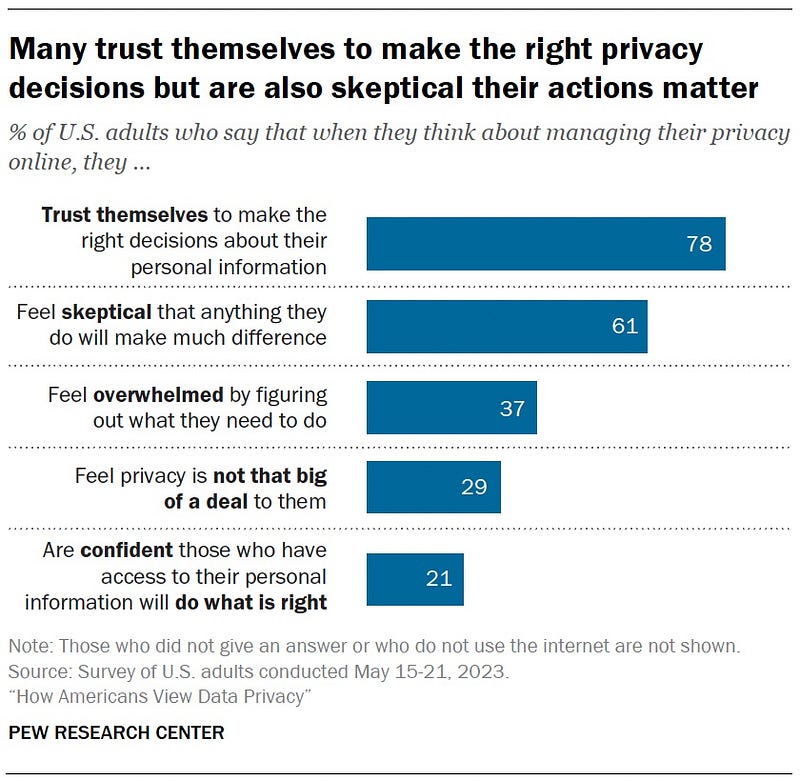

Most Americans believe they can make smart choices about their personal data — 78% say they trust themselves to make the right privacy decisions.

But when it comes to the bigger picture, that confidence disappears. 61% feel skeptical that anything they do will actually make a difference.

This is the privacy confidence trap: people think they know what they’re doing, but they don’t believe it matters. And so, they don’t act.

It’s easy to see why.

37% of Americans feel overwhelmed by privacy decisions, and it’s no mystery — figuring out how to protect personal data is intentionally complicated.

Every platform has different settings, and companies constantly tweak them to be harder to navigate. Even when people try to lock down their data, it often feels like an exercise in futility.

And trust? It’s gone. Only 21% believe that companies or institutions will do the right thing with their data.

Americans know they’re being exploited, yet most have resigned themselves to it. The system is too confusing, too relentless, and too ingrained in daily life to fight against.

For some, privacy just isn’t a priority. Nearly 3 in 10 Americans say it’s “not that big of a deal.”

Whether due to fatigue, convenience, or simply growing up in a world where privacy is an afterthought, a significant portion of the public has checked out of the conversation entirely.

When people trust themselves but not the system, when they feel overwhelmed yet indifferent, when they worry but still click “accept,” the result is predictable: nothing changes.

Americans aren’t failing to protect their privacy — they’ve been set up to fail.

Why People Don’t Trust Social Media With Their Personal Data

Americans don’t trust social media companies with their data. They just don’t expect anything to change.

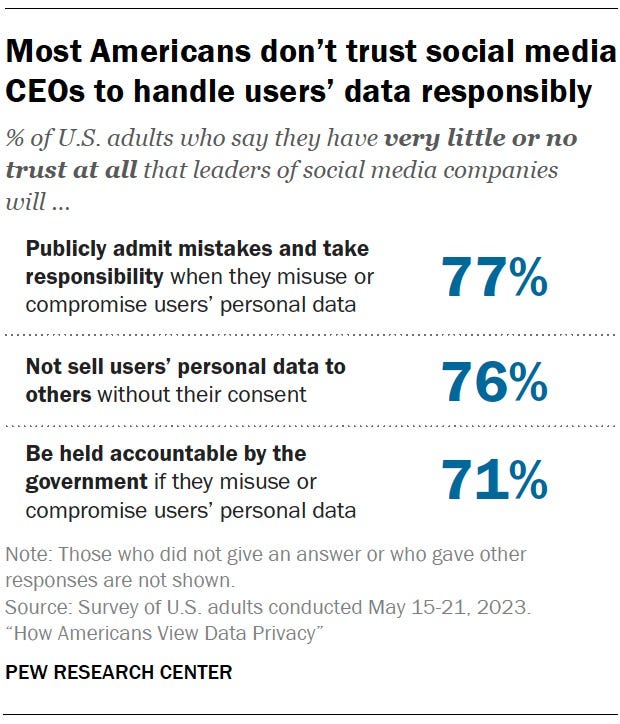

The Pew survey makes this clear:

- 77% believe social media CEOs won’t admit mistakes

- 76% don’t trust them to stop selling personal data

- 71% don’t think they’ll be held accountable by the government.

This isn’t just skepticism; it’s resignation.

People aren’t waiting for tech companies to change their ways. They assume they never will.

And they’re probably right.

Social media giants don’t need public trust to survive. Facebook, Instagram, TikTok, and X (Twitter) are too ingrained in daily life. Even if people don’t trust them, they still use them.

The result? No real pressure to change. These companies know that even after the latest scandal, users will log back in. Advertisers will keep paying for eyeballs. The cycle repeats.

Meanwhile, the government hasn’t stepped in.

Americans overwhelmingly believe there’s no real oversight — just 29% think tech executives will face consequences for misusing data. That lack of enforcement fuels more inaction.

If people don’t believe regulators will protect them, why bother fighting for stricter rules?

This is where privacy violations become just another fact of life. People don’t just distrust social media companies — they’ve accepted that they can’t be stopped.

That kind of resignation is exactly what these platforms count on.

Why Privacy Experts Take More Action But Feel Less Secure

People who understand privacy risks are the most likely to take action. But they’re also the most skeptical that it matters.

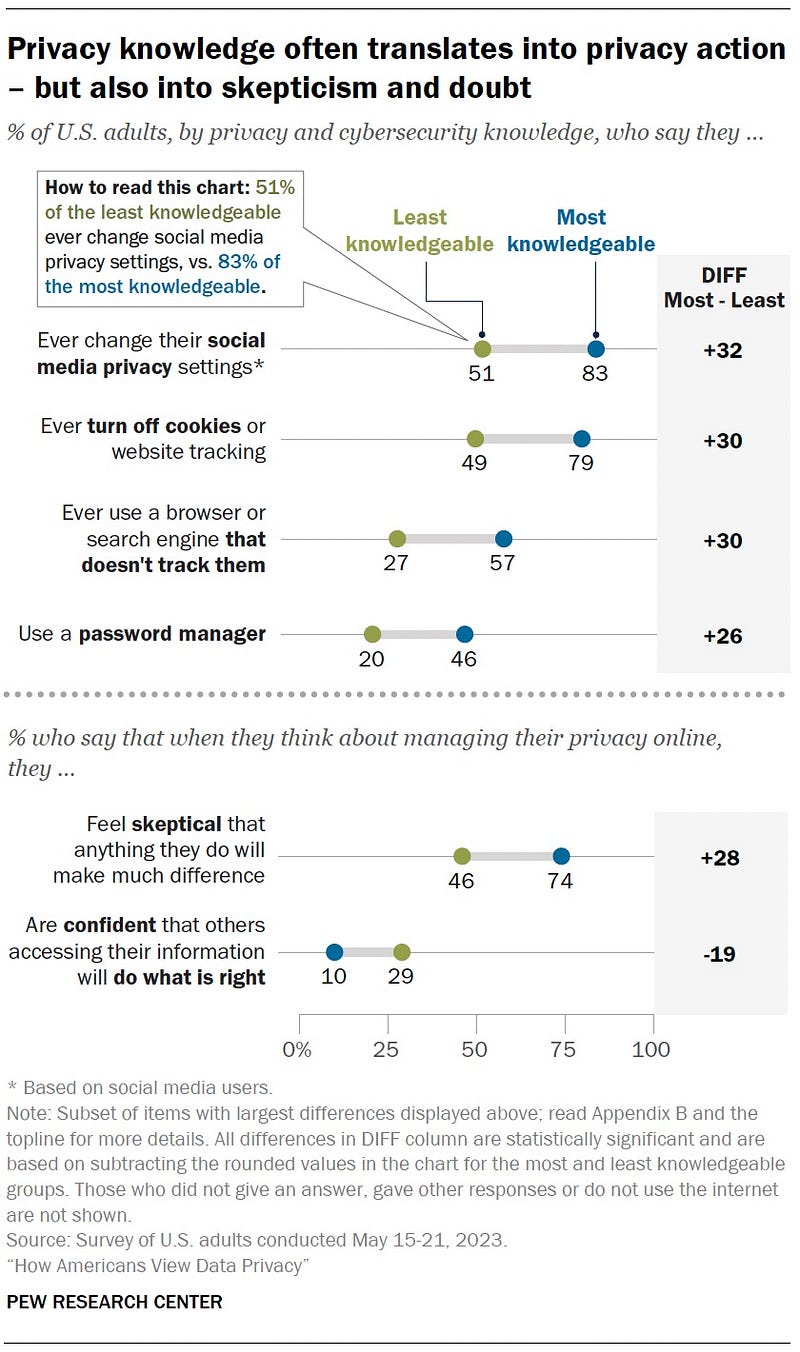

The Pew survey shows that 83% of the most knowledgeable Americans change their social media privacy settings, compared to just 51% of the least knowledgeable. They’re also far more likely to block cookies, use private browsers, and manage their passwords carefully.

On paper, this looks like a win — greater awareness leads to better privacy habits.

But here’s the problem: 74% of these same privacy-savvy individuals feel skeptical that anything they do actually makes a difference. They take precautions, but they don’t believe those precautions truly protect them.

And they might be right.

For every privacy setting, there’s a loophole. For every tracker blocked, another is running in the background. For every new regulation, there’s a workaround. The more people learn about digital surveillance, the more they realize that control is out of their hands.

This creates a troubling paradox: those who understand the system best have the least faith in it.

Their skepticism isn’t just a personal belief — it’s a sign that the system itself is broken. If the people making an effort still feel powerless, what chance does the average user have?

How AI Is Making Data Privacy Worse (And Why It Matters)

Americans already feel powerless over their data. Now, artificial intelligence is set to make that problem even worse.

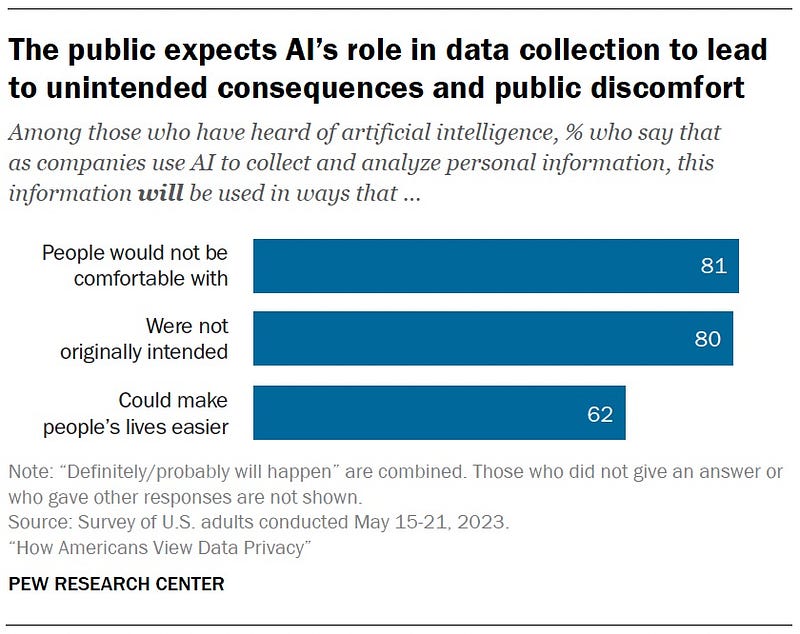

According to the Pew survey, 81% of Americans believe AI will be used in ways they’re not comfortable with, and 80% expect their personal information to be used for unintended purposes.

People see where this is going: as AI systems process more personal data, privacy risks will scale beyond human oversight.

62% also acknowledge that AI could make life easier.

This mirrors the same trade-off we’ve seen throughout the digital age — convenience over control.

AI-powered personalization, automation, and efficiency will become too useful to reject outright.

But at what cost?

If people already feel helpless in the face of corporate data collection, how will they push back against AI systems that operate in the background, learning from their every interaction?

AI won’t just track behavior — it will predict, profile, and manipulate. And if history is any guide, the public won’t be given a meaningful choice in the matter.

We’ve already accepted that privacy policies are unreadable. That surveillance is inevitable. That companies won’t change.

Now, AI threatens to accelerate all of these trends.

The question is: Will this be the moment people fight back?

Or will they, once again, just click ‘accept’?

The Truth About Data Privacy: Why the System Is Rigged Against You

Americans care about privacy, but they don’t understand it.

Even when they do, they feel powerless to change anything.

They don’t trust social media companies, they don’t trust regulators, and they don’t trust that their own actions will make a difference.

And yet, most people accept the trade-off. They click “agree.” They stay on platforms they don’t trust. They assume privacy is already lost.

AI will only deepen this cycle, automating data collection at a scale humans can’t track, let alone control.

This isn’t the result of individual failure. It’s the outcome of a system designed to extract data, obscure its use, and offer no real alternatives.

As long as companies profit from surveillance, nothing will change.

Privacy shouldn’t be something people have to fight for — it should be guaranteed by default.

About the Author

Lawton is an economist who writes about markets, policy, and the forces shaping American life. His essays blend historical insight with data-driven analysis, covering everything from trade wars and inflation to labor markets and financial bubbles.

When he’s not writing about the economy, he’s making music, crafting fiction, or hanging out with his cat, Boudin.

Read more of his work on Medium: https://medium.com/@lawtonperret

Methodology

All data in this discussion comes from the Pew Research Center’s 2023 study, “How Americans View Data Privacy” (Pew Research, 2023). The study surveyed 5,101 U.S. adults from May 15 to May 21, 2023, using the American Trends Panel (ATP), a nationally representative online survey.

Pew oversampled Hispanic men, non-Hispanic Black men, and non-Hispanic Asian adults for more precise estimates, later weighting responses to match actual population proportions. The response rate was 87%, though long-term attrition resulted in a cumulative response rate of 3%.
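For readers curious how weighting corrects for oversampling, here is a minimal sketch of the general idea. It assumes a simple one-variable post-stratification, where each group's weight is its population share divided by its sample share. Pew's actual weighting procedure is more involved, and the group names and numbers below are hypothetical, chosen only for illustration.

```python
def poststratification_weights(sample_counts, population_shares):
    """Return one weight per group so the weighted sample matches the population."""
    total = sum(sample_counts.values())
    weights = {}
    for group, count in sample_counts.items():
        sample_share = count / total
        # Oversampled groups get a weight below 1, undersampled groups above 1.
        weights[group] = population_shares[group] / sample_share
    return weights


# Hypothetical numbers for illustration only; not taken from the Pew study.
sample_counts = {"group_a": 600, "group_b": 400}        # group_b was oversampled
population_shares = {"group_a": 0.80, "group_b": 0.20}  # assumed true proportions

weights = poststratification_weights(sample_counts, population_shares)

# After weighting, each group's share of the sample equals its population share.
weighted_total = sum(sample_counts[g] * weights[g] for g in sample_counts)
weighted_shares = {
    g: sample_counts[g] * weights[g] / weighted_total for g in sample_counts
}

for group in sample_counts:
    print(group, round(weights[group], 3), round(weighted_shares[group], 3))
```

In this toy example, responses from the oversampled group count for less and responses from the other group count for more, so the extra interviews improve precision for the smaller group without distorting the overall percentages.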

While the study accurately reflects public attitudes toward data privacy, it is self-reported and does not measure actual behavior. Since participation was voluntary, those more interested in data privacy may be overrepresented, meaning general apathy could be even higher than reported.

Read More

- How Americans View Data Privacy (Pew Research Center, www.pewresearch.org)

- To Read All Those Web Privacy Policies, Just Take A Month Off Work (All Tech Considered, NPR)